NForge v1.1.0 introduces a complete robotics integration layer designed for the Galbot G1 humanoid robot. This release bridges the gap between computational neuroscience and physical robotic systems, enabling humanoid robots to understand and respond to human emotional states by decoding predicted brain activity patterns in real time.

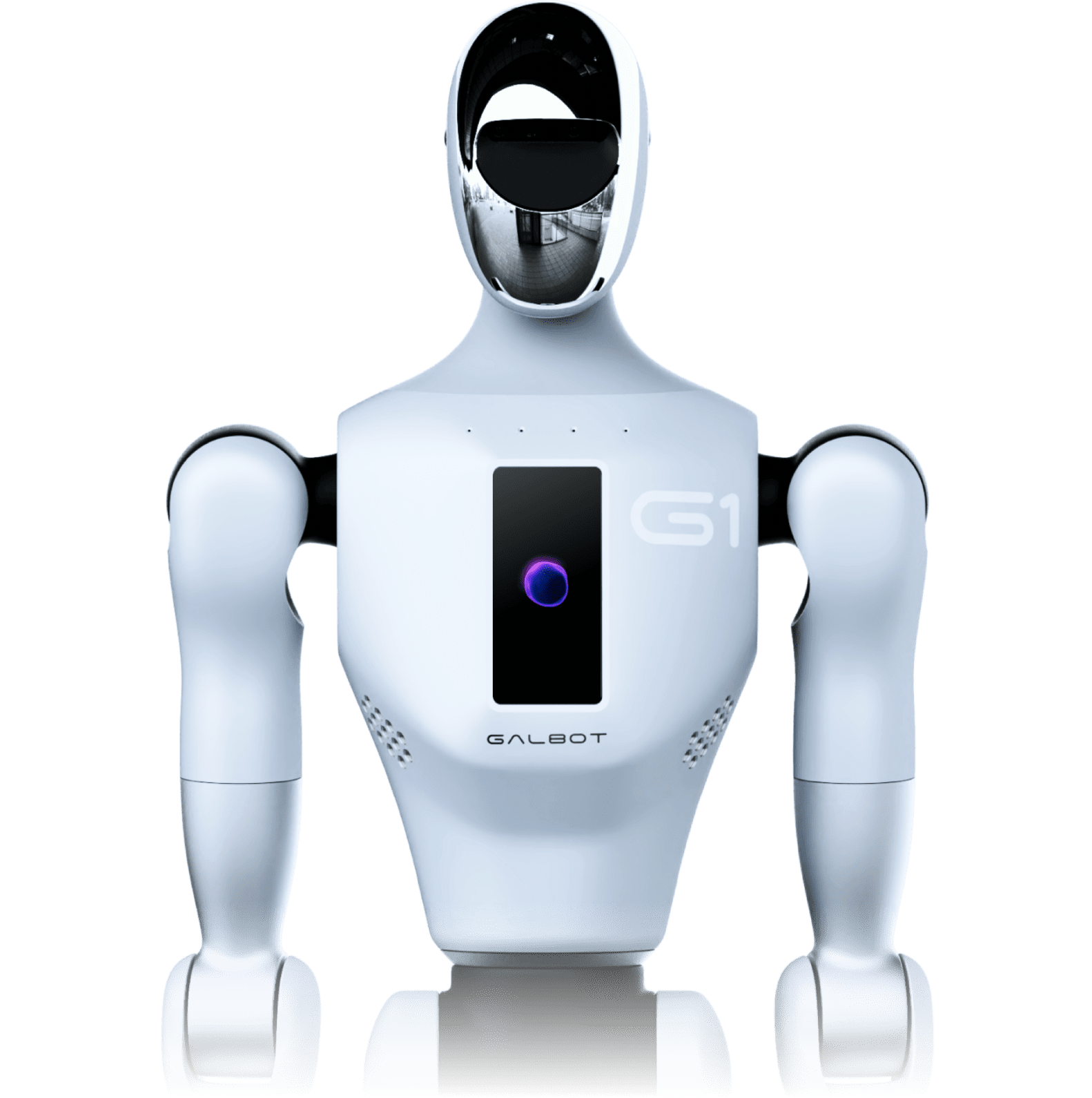

Powered by Galbot G1

The Galbot G1 is a 173 cm general-purpose humanoid robot with a 4-microphone array, RGB-D cameras, tactile sensors, and 8-core AI processing. Its 95%+ grasping accuracy and 10-hour runtime make it well suited to sustained therapeutic interaction. With NForge v1.1.0, the G1 can infer a person's emotional state from predicted neural activity.

The centrepiece of this release is the Emotional Therapy pipeline, a closed-loop system in which the Galbot G1 observes a patient through its cameras and microphones, NForge predicts the cortical response that this audiovisual input would evoke, an emotion engine decodes valence, arousal, and dominance from the predicted brain state, and the robot adapts its behaviour to the patient's state during the therapeutic interaction.

Emotion Engine

Maps NForge cortical surface predictions onto three emotional dimensions using neuroscience-grounded ROI mappings: valence (positive/negative), arousal (calm/excited), and dominance (submissive/dominant). For each dimension, the engine averages predicted activation across the associated HCP MMP1.0 regions: orbitofrontal cortex for valence, temporal pole and anterior insula (cortical proxies for the subcortical amygdala) for arousal, and frontal operculum for dominance. It outputs a confidence-weighted EmotionState at each timestep.
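The ROI-averaging step can be sketched as follows. This is a minimal illustration under stated assumptions, not NForge's implementation: the parcel lists in ROI_MAP, the hypothetical decode_emotion function, the tanh squashing into [-1, 1], and the confidence formula are all placeholders chosen for the example.

```python
from dataclasses import dataclass

import numpy as np

# Hypothetical ROI sets built from HCP MMP1.0 parcel names; the parcels
# NForge actually assigns to each dimension may differ.
ROI_MAP = {
    "valence": ["OFC", "pOFC", "11l", "13l"],                # orbitofrontal cortex
    "arousal": ["TGd", "TGv", "AVI", "AAIC"],                # temporal pole, anterior insula
    "dominance": ["FOP1", "FOP2", "FOP3", "FOP4", "FOP5"],   # frontal operculum
}


@dataclass
class EmotionState:
    valence: float
    arousal: float
    dominance: float
    confidence: float


def decode_emotion(prediction, vertex_labels, roi_map=ROI_MAP):
    """Average one timestep's predicted activation over each dimension's ROIs.

    prediction:    (n_vertices,) predicted cortical activation.
    vertex_labels: (n_vertices,) parcel name for every vertex.
    """
    prediction = np.asarray(prediction, dtype=float)
    vertex_labels = np.asarray(vertex_labels)
    scores = {}
    for dim, rois in roi_map.items():
        mask = np.isin(vertex_labels, rois)
        # tanh squashes the ROI mean into [-1, 1]; 0.0 if no vertex matches.
        scores[dim] = float(np.tanh(prediction[mask].mean())) if mask.any() else 0.0
    # Placeholder confidence: mean magnitude of the three scores.
    confidence = float(np.mean([abs(s) for s in scores.values()]))
    return EmotionState(**scores, confidence=confidence)
```

For example, decode_emotion([1.0, 0.0, -1.0, 5.0], ["OFC", "TGd", "FOP1", "V1"]) yields positive valence, zero arousal, and negative dominance; the V1 vertex is ignored because it belongs to no ROI set.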

Robot Control Bridge

Translates decoded emotion states into Galbot G1 action commands across four channels:

- speech_tone (calm, warm, energetic, concerned)
- facial_expression (neutral, smile, empathy, concern)
- gesture (nod, open_palms, lean_forward, thumbs_up)
- approach_distance (0.8–1.8 m adaptive proximity)

Built on an abstract RobotBridge base class: to support another robot platform, implement a single translate() method.
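A minimal sketch of the RobotBridge pattern, assuming translate() takes an EmotionState and returns a dict of channel commands (the real signature is not documented here). SketchGalbotBridge, its 0.3 threshold, and the valence-to-distance mapping are hypothetical; they only show how the four channels and the 0.8–1.8 m range could be driven from valence and arousal.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class EmotionState:
    valence: float     # -1 (negative) .. 1 (positive)
    arousal: float     # -1 (calm) .. 1 (excited)
    dominance: float   # -1 (submissive) .. 1 (dominant)
    confidence: float = 1.0


class RobotBridge(ABC):
    """Platform-agnostic base class: one method maps emotion to commands."""

    @abstractmethod
    def translate(self, emotion: EmotionState) -> dict:
        """Return a dict of action commands for the target robot."""


class SketchGalbotBridge(RobotBridge):
    """Hypothetical G1 policy; thresholds and mappings are illustrative."""

    THRESHOLD = 0.3

    def translate(self, emotion: EmotionState) -> dict:
        v, a = emotion.valence, emotion.arousal
        excited = a > self.THRESHOLD
        if v < -self.THRESHOLD:    # negative mood: de-escalate
            tone, face, gesture = (
                ("concerned", "concern", "open_palms") if excited
                else ("calm", "empathy", "lean_forward")
            )
        elif v > self.THRESHOLD:   # positive mood: reinforce
            tone, face, gesture = (
                ("energetic", "smile", "thumbs_up") if excited
                else ("warm", "smile", "nod")
            )
        else:
            tone, face, gesture = "warm", "neutral", "nod"
        # Map valence in [-1, 1] linearly onto 1.8 m .. 0.8 m, clamped to that range.
        distance = min(1.8, max(0.8, 1.3 - 0.5 * v))
        return {
            "speech_tone": tone,
            "facial_expression": face,
            "gesture": gesture,
            "approach_distance": round(distance, 2),
        }
```

Because translate() is declared abstract, Python refuses to instantiate RobotBridge itself or any subclass that forgets to implement it, which keeps every platform adapter to the same one-method contract.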

Biometric Fusion

Accepts wearable EEG (10-20 system, up to 17 channels) and fNIRS (8 source-detector pairs) sensor data and projects it into NForge's feature space via a learned linear transform. The fused "biometric" modality tensor plugs directly into StreamingPredictor.push_frame(), enabling real-time therapy sessions in which the robot receives environmental perception (camera/microphone) and physiological brain signals at the same time.
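The fusion step can be sketched as a single affine map from the concatenated sensor readings to a feature vector. This sketch assumes one fNIRS value per source-detector pair, zero-padding for headsets with fewer than 17 EEG electrodes, and a hypothetical 256-wide feature space; the weights are random here rather than learned, and passing the result to StreamingPredictor.push_frame() is not shown because its input format is not documented in these notes.

```python
import numpy as np

N_EEG = 17        # up to 17 channels (10-20 system), per the release notes
N_FNIRS = 8       # 8 source-detector pairs; one value per pair is assumed
D_FEATURE = 256   # hypothetical width of NForge's feature space


class BiometricProjector:
    """Linear projection of one EEG + fNIRS sample into feature space.

    In NForge the transform is learned; here W is randomly initialised and
    b is zero, purely to show the shapes involved.
    """

    def __init__(self, d_feature=D_FEATURE, seed=0):
        rng = np.random.default_rng(seed)
        d_in = N_EEG + N_FNIRS
        self.W = rng.normal(0.0, 1.0 / np.sqrt(d_in), size=(d_in, d_feature))
        self.b = np.zeros(d_feature)

    def __call__(self, eeg, fnirs):
        eeg = np.asarray(eeg, dtype=float)
        fnirs = np.asarray(fnirs, dtype=float)
        if eeg.ndim != 1 or eeg.size > N_EEG:
            raise ValueError(f"expected at most {N_EEG} EEG channels")
        if fnirs.shape != (N_FNIRS,):
            raise ValueError(f"expected {N_FNIRS} fNIRS values")
        # Zero-pad headsets with fewer than 17 electrodes (an assumption).
        eeg = np.pad(eeg, (0, N_EEG - eeg.size))
        x = np.concatenate([eeg, fnirs])  # shape (25,)
        return x @ self.W + self.b        # shape (d_feature,)
```

A linear map keeps the fusion cheap enough to run on every frame; a learned version would fit W and b so that the projected sensor data lands in the same feature space as the camera and microphone features.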

Therapy Session Manager

Orchestrates full therapy sessions by logging timestamped emotion states and computing engagement metrics: emotional stability (valence consistency), engagement score (arousal magnitude), and progress (valence trend slope via linear regression). The session manager tracks the emotional trajectory and provides trajectory-aware action suggestions: if a patient's valence is declining, the robot automatically shifts to gentler tones and open-palm gestures. Full session reports can be exported as JSON for clinical review.
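The three metrics and the trajectory-aware adjustment can be sketched as below. SessionSketch is a hypothetical stand-in rather than the TherapySession API, and the stability formula, 1 / (1 + standard deviation of valence), is an assumed reading of "valence consistency"; progress is the least-squares slope computed with np.polyfit, matching the linear-regression description.

```python
import json
import time
from dataclasses import dataclass, field

import numpy as np


@dataclass
class SessionSketch:
    """Hypothetical stand-in for TherapySession showing the three metrics."""

    patient_id: str
    entries: list = field(default_factory=list)  # (timestamp, valence, arousal)

    def record(self, valence, arousal, timestamp=None):
        ts = time.time() if timestamp is None else timestamp
        self.entries.append((float(ts), float(valence), float(arousal)))

    def metrics(self):
        if len(self.entries) < 2:
            raise ValueError("need at least two logged states")
        t, v, a = (np.array(col) for col in zip(*self.entries))
        return {
            # Stability: assumed formula 1 / (1 + std of valence); 1.0 = steady.
            "stability": float(1.0 / (1.0 + v.std())),
            # Engagement: mean absolute arousal.
            "engagement": float(np.abs(a).mean()),
            # Progress: least-squares slope of valence against time.
            "progress": float(np.polyfit(t, v, 1)[0]),
        }

    def adjust(self, action):
        """Soften the suggested action when the valence trend is negative."""
        if len(self.entries) >= 2 and self.metrics()["progress"] < 0:
            action = {**action, "speech_tone": "calm", "gesture": "open_palms"}
        return action

    def save_report(self, path):
        report = {"patient_id": self.patient_id, "metrics": self.metrics(),
                  "entries": self.entries}
        with open(path, "w") as f:
            json.dump(report, f, indent=2)
```

With valences 0.5, 0.3, 0.1, -0.1 logged at t = 0, 1, 2, 3, the fitted slope is -0.2 per time unit, so adjust() replaces a warm tone and nod with a calm tone and open palms.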

Integration Architecture

Quick Start

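```python
from nforge.inference.streaming import StreamingPredictor

# EmotionEngine, GalbotBridge and TherapySession are part of the v1.1.0
# robotics layer; their import path is not given here.

# Initialize pipeline
engine = EmotionEngine.from_hcp_labels()
bridge = GalbotBridge()
session = TherapySession(patient_id="patient_001")

# streaming_predictor: a configured StreamingPredictor instance.
# frames: placeholder for any iterable of per-frame feature inputs.
for features in frames:
    prediction = streaming_predictor.push_frame(features)
    if prediction is not None:  # push_frame() can return None; skip those frames
        emotion = engine.process(prediction)
        session.log(emotion)
        action = session.suggest_action(bridge)
        # action = {"speech_tone": "warm", "gesture": "nod", ...}

# Export session report
session.save_report("sessions/patient_001.json")
```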